The High Stakes of 2026 Healthcare AI

In the landscape of 2026, building a healthcare chatbot is no longer just a "feature request"; it is a high-stakes engineering challenge. With the full implementation of HIPAA 2.0, the Department of Health and Human Services (HHS) has moved from "addressable" guidelines to strict technical mandates.

As Technical Architects, we can no longer simply wrap an LLM API and call it a day. We need a system that is Secure by Design. This guide explores the definitive architecture for building production-ready, HIPAA-compliant AI using Next.js.

1. The Architect’s Verdict: Why Next.js and Not Just React?

A common question from stakeholders is: "Can't we just build this in standard React?" In 2026, the answer is a firm No. While React is a brilliant UI library, a standard React single-page app runs entirely in the browser. For HIPAA compliance, the client side is your greatest vulnerability.

Why Next.js is the "Security King":

- The Zero-Trust Client: In a standard React SPA, the browser makes the API calls and holds the responses in memory. In Next.js, React Server Components (RSC) fetch and process data on the server and send only the rendered output to the client. The browser receives just the fields you choose to render, never the raw Protected Health Information (PHI) records behind them.

- The "Server-Only" Guard: Next.js supports the server-only npm package. Importing it in a module makes the build fail if that module is ever pulled into a client-side bundle, so our medical scrubbing logic and EHR (Electronic Health Record) connection strings cannot leak by mistake.

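- Hidden Network Traces: A standard React app calls your backend or the AI provider directly from the browser, so endpoints, payloads, and any key shipped in the bundle are visible in the "Network" tab. A Next.js Server Action still shows up there as a POST request, but your AI credentials and backend logic run only on the server and never reach the end user. Because every Server Action is a publicly reachable endpoint, it must still authenticate and authorize each call.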

- XSS Protection: Next.js simplifies the use of HttpOnly secure cookies. Standard React apps often fall into the trap of using localStorage for tokens, which is a massive HIPAA liability due to Cross-Site Scripting (XSS) risks.
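To make the cookie point above concrete, here is a minimal sketch of the attributes a session cookie would carry. The function name buildSessionCookie and its options are hypothetical; in a Next.js app the same attributes would normally be set through the cookies() helper from next/headers rather than by assembling the header by hand.

```typescript
// Hypothetical option shape for illustration only.
interface SessionCookieOptions {
  name: string;
  value: string;
  maxAgeSeconds: number;
}

// Builds a Set-Cookie header value with the flags that keep the token
// out of reach of page scripts and off plain-HTTP connections.
function buildSessionCookie({ name, value, maxAgeSeconds }: SessionCookieOptions): string {
  return [
    `${name}=${encodeURIComponent(value)}`,
    "Path=/",
    `Max-Age=${maxAgeSeconds}`,
    "HttpOnly",        // not readable from document.cookie, limits XSS token theft
    "Secure",          // only sent over HTTPS
    "SameSite=Strict", // not attached to cross-site requests
  ].join("; ");
}

const header = buildSessionCookie({ name: "session", value: "abc 123", maxAgeSeconds: 600 });
console.log(header);
```

Unlike a token in localStorage, a cookie marked HttpOnly cannot be read by injected JavaScript, which is the property the bullet above relies on.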

2. The 2026 "Zero-Knowledge" Tech Stack

To satisfy a modern auditor, every vendor in your stack that stores, processes, or transmits PHI must be covered by a Business Associate Agreement (BAA).

3. Implementation: The Secure Data Pipeline

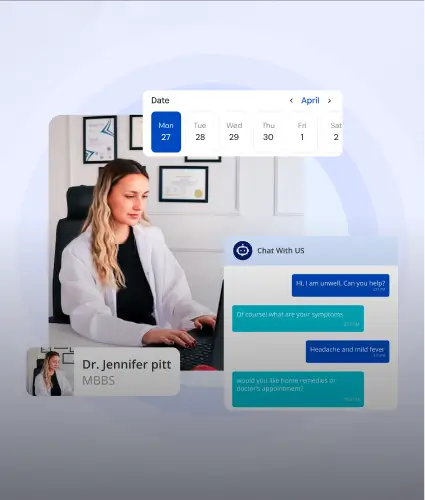

A compliant chatbot follows a strict four-step lifecycle for every single message to ensure the "Minimum Necessary" standard is met.

Step 1: The "Scrubber" (Server Action)

Before the text leaves your VPC, it must pass through a redaction layer.
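A redaction layer can be sketched as a list of pattern rules applied in order. This is an illustration under stated assumptions, not a complete de-identification method: the rule set, the labels, and the function name scrubPhi are all hypothetical, and regular expressions alone will miss free-text identifiers such as names and addresses.

```typescript
// Hypothetical rule shape: a label to substitute and a pattern to find.
interface ScrubRule {
  label: string;
  pattern: RegExp;
}

// Assumed US-style formats; a real deployment would need a much broader set.
const SCRUB_RULES: ScrubRule[] = [
  { label: "SSN", pattern: /\b\d{3}-\d{2}-\d{4}\b/g },
  { label: "PHONE", pattern: /\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b/g },
  { label: "EMAIL", pattern: /[\w.+-]+@[\w-]+(?:\.[\w-]+)+/g },
  { label: "DATE", pattern: /\b\d{1,2}\/\d{1,2}\/\d{2,4}\b/g },
];

// Replaces every match of every rule with a bracketed placeholder.
function scrubPhi(text: string): string {
  return SCRUB_RULES.reduce(
    (out, rule) => out.replace(rule.pattern, `[${rule.label}]`),
    text,
  );
}

console.log(scrubPhi("SSN 123-45-6789, call 555-123-4567 or jane@example.com, DOB 01/02/1980"));
// prints "SSN [SSN], call [PHONE] or [EMAIL], DOB [DATE]"
```

Keeping the placeholders typed ([SSN], [DATE]) rather than blank lets the model still reason about what kind of information was present without seeing the value.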

Step 2: The AI Handshake (With ZDR)

Your Server Action forwards the scrubbed prompt to a HIPAA-eligible LLM. You must ensure Zero-Data Retention (ZDR) is toggled "ON." With ZDR, the provider does not persist prompts or completions once the request has been served, so your patient data cannot sit in their logs or be used to train their future models.
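One way to make the ZDR requirement hard to forget is a guard that refuses to send anything unless your own deployment config records that the BAA and ZDR are in place. The sketch below assumes a hypothetical ProviderConfig shape and an injected transport function; it does not represent any provider's real API, and the flags only mirror what has been confirmed with the provider out of band.

```typescript
// Hypothetical config recorded by your team after confirming terms with the provider.
interface ProviderConfig {
  endpoint: string;           // placeholder URL, not a real service
  baaSigned: boolean;
  zeroDataRetention: boolean;
}

// Injected so the network layer can be swapped or faked in tests.
type Transport = (endpoint: string, body: string) => Promise<string>;

// Throws before any data leaves the server if the provider is not cleared.
function assertProviderCompliant(config: ProviderConfig): void {
  if (!config.baaSigned) {
    throw new Error("Refusing to send: no BAA on file for this provider.");
  }
  if (!config.zeroDataRetention) {
    throw new Error("Refusing to send: Zero-Data Retention is not confirmed.");
  }
}

// Forwards an already-scrubbed prompt only after the guard passes.
async function sendScrubbedPrompt(
  config: ProviderConfig,
  scrubbedPrompt: string,
  transport: Transport,
): Promise<string> {
  assertProviderCompliant(config);
  return transport(config.endpoint, JSON.stringify({ prompt: scrubbedPrompt }));
}
```

Failing closed here means a misconfigured environment produces an error on the server instead of a silent disclosure.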

4. Code Example: A Secure Server Action

This is the bridge between your React frontend and the secure backend. Note how the sensitive work happens entirely on the server.
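What follows is a minimal sketch of such a Server Action, not a definitive implementation. The names sendMessage, scrub, callModel, and ChatResult are hypothetical, the length limit is an assumed value, and callModel is a local stand-in for the real call to a BAA-covered model. A real action would also verify the user's session before doing any work.

```typescript
"use server"; // marks the exported async functions in this file as Server Actions

// Hypothetical result shape returned to the client component.
interface ChatResult {
  ok: boolean;
  reply: string;
}

const MAX_MESSAGE_LENGTH = 2000; // assumed limit for illustration

// Simplified redaction; a real scrubber would cover far more identifier types.
function scrub(text: string): string {
  return text
    .replace(/\b\d{3}-\d{2}-\d{4}\b/g, "[SSN]")
    .replace(/[\w.+-]+@[\w-]+(?:\.[\w-]+)+/g, "[EMAIL]");
}

// Stand-in for the request to the LLM provider (assumption, no real API is called).
async function callModel(prompt: string): Promise<string> {
  return `(model reply to: ${prompt})`;
}

export async function sendMessage(message: string): Promise<ChatResult> {
  // Validate input on the server; never trust client-side checks.
  const trimmed = message.trim();
  if (trimmed.length === 0 || trimmed.length > MAX_MESSAGE_LENGTH) {
    return { ok: false, reply: "Message is empty or too long." };
  }

  // Redact before the prompt leaves the server.
  const prompt = scrub(trimmed);

  try {
    const raw = await callModel(prompt);
    // Redact again on the way out in case the model echoes an identifier.
    return { ok: true, reply: scrub(raw) };
  } catch {
    // Return a generic message so no prompt text ends up in client-visible errors.
    return { ok: false, reply: "The assistant is unavailable right now." };
  }
}
```

A client component would import sendMessage and call it from a form handler; only the ChatResult object crosses back to the browser.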

5. The Architect’s "Common Traps" Checklist

Avoid these expensive mistakes that lead to compliance failure:

- The Logging Leak: Error-monitoring and session-replay tools (Sentry, LogRocket) can capture the contents of input fields. You must disable auto-capture on all text areas to prevent PHI from landing in your error logs or replays.

- The Streaming Risk: If you stream AI responses, implement a "Last-Mile Redactor" on the server. If the AI "hallucinates" a patient identifier, the redactor must kill the stream immediately.

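- The Inactivity Gap: HIPAA 2.0 requires active session termination. Use a useIdleTimer hook (for example, the one from the react-idle-timer library) in your React components to trigger a server-side logout after 10 minutes of inactivity, and enforce the same expiry on the server so the session still ends if the browser timer never fires.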
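The "Last-Mile Redactor" from the streaming item above can be sketched as a small stateful filter that sits between the model stream and the response. This is an illustration under assumptions: the class name, the two identifier patterns, and the hold-back size are hypothetical. It holds back the last few characters of output so that an identifier split across two chunks is caught before its first half is sent.

```typescript
// Assumed identifier formats; the MRN format in particular is invented for illustration.
const IDENTIFIER_PATTERNS: RegExp[] = [
  /\b\d{3}-\d{2}-\d{4}\b/,   // SSN-like
  /\bMRN[:\s]*\d{6,10}\b/i,  // medical-record-number-like
];

// Characters withheld until more text arrives; should exceed the longest identifier.
const HOLD_BACK = 16;

class LastMileRedactor {
  private pending = "";
  private killed = false;

  // Returns text that is safe to forward, or null once the stream must be terminated.
  push(chunk: string): string | null {
    if (this.killed) return null;
    this.pending += chunk;
    if (IDENTIFIER_PATTERNS.some((p) => p.test(this.pending))) {
      this.killed = true;
      this.pending = ""; // drop everything not yet sent
      return null;
    }
    const cut = Math.max(0, this.pending.length - HOLD_BACK);
    const safe = this.pending.slice(0, cut);
    this.pending = this.pending.slice(cut);
    return safe;
  }

  // Call when the model finishes; releases the held-back tail if the stream survived.
  flush(): string | null {
    if (this.killed) return null;
    const rest = this.pending;
    this.pending = "";
    return rest;
  }
}
```

When push returns null, the server should close the response stream and log the event without the offending text. The trade-off is a small delay of HOLD_BACK characters in what the user sees.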

Conclusion: Compliance is a Culture, Not a Checklist

In 2026, the success of a Healthcare AI product isn't measured by how "smart" the chatbot is, but by how invulnerable the data remains. By using Next.js as your security backbone, you aren't just checking a compliance box; you are building a fortress of trust.

Build for privacy. Architect for trust.
